Query Optimizer Deep Dive—Part 4

This is the final part in a series of posts based on the content of the Query Optimizer Deep Dive presentations I have given over the last month or so at the Auckland SQL Users’ Group, and SQL Saturday events in Wellington, New Zealand and Adelaide, Australia.

Beating the Optimizer

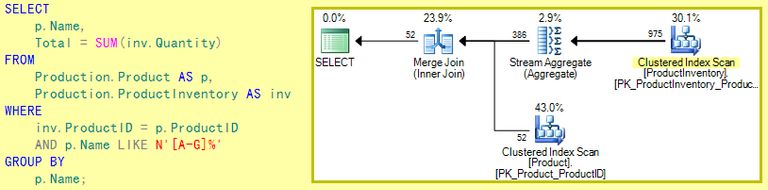

Our AdventureWorks test query produces an optimized physical execution plan that is quite different from the logical form of the query.

The estimated cost of the execution plan shown below is 0.0295 units.

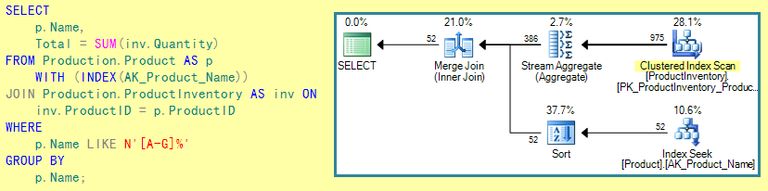

Since we know the database schema very well, we might wonder why the optimizer did not choose to use the unique nonclustered index on Name in the Product table to filter rows based on the LIKE predicate. We could use an index hint to force the name index to be used:

That’s great, and no doubt the index seek is cheaper than the scan we had previously, but the optimizer has still chosen to use a merge join, and that means having both inputs sorted on ProductID.

The result of the Index Seek is ordered by Name (the index key) rather than ProductID, so a Sort is required. It looks like the new sort adds a little more cost than the seek saves over the scan, because the estimated cost of the query plan with the index hint is 0.0316 units.

Naturally, these numbers are rather small since AdventureWorks is not a large database, but these differences can be important in real systems.

Anyway, let’s persist with the index seek idea; Why is the optimizer so keen on a Merge Join, even though it involves an extra Sort, and we don’t have an ORDER BY Product ID clause on our query? Without a top-level ORDER BY, we are giving the optimizer the freedom to return results in any order that is convenient.

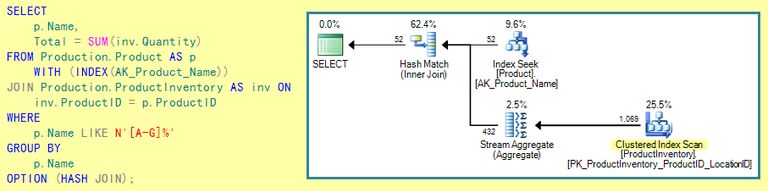

Perhaps we can do better by forcing the index seek and a Hash Join instead of a Merge Join?

Well the Sort has gone, so the plan looks visually a little simpler, but the estimated cost has increased again, to 0.0348 units.

Hash Join has quite a high start-up cost (and it requires a memory workspace grant). We could try other things, but it certainly seems that the optimizer had it right to begin with in this case.

The manual exploration above shows that the optimizer does generally find a good plan quickly (and sometimes it may even find the best possible plan). The terms ‘good’ and ‘best’ here are measured in terms of the optimizer’s own cost model.

Whether one particular plan shape actually executes faster on a given system is another question. I might find, for example, that the first Merge Join plan runs fastest for me, whereas you might find the Seek and Sort runs fastest for you.

We might both find that which is faster depends on whether the indexes and data needed are in memory or need to be retrieved from persistent storage.

All these things aside, the important thing is that we are both likely to find that the optimizer’s plans are pretty good most of the time.

Models and Limitations

There are three principal models used by the optimizer to provide a basis for its reasoning: Cardinality estimation, logical relational equivalences, and physical operator costing.

Good cardinality estimation (row count expectations at each node of the logical tree) is vital; if these numbers are wrong, all later decisions are suspect. Fortunately, it is relatively easy to check cardinality estimation by comparing actual and estimated row counts in query plans. There are some subtleties to be aware of, for example when interpreting the inner side of a nested loops join.

The model used in cardinality estimation is complex, and contains all sorts of hairy-looking formulas and calculations. Nevertheless, it is still a model, and as such will deviate from reality at least to some extent. I’ll have more to say later on about what we can do to help ensure good cardinality estimates, but for now we will just note that the model has its roots in relational theory and statistical analysis.

Relational equivalences (such as A inner join B = B inner join A) are the basis for exploration rules in the cost-based optimizer. Not all possible relational transformations are included in the product (remember the goal of the optimizer is to find a good plan quickly, not to perform an exhaustive search of all possible plans).

As a consequence, the SQL syntax you use will often affect the plan produced by the optimizer, even where different SQL syntaxes express the same logical requirement. Also, the skilled query tuner will often be able to do better than the optimizer, given enough time and perhaps a better insight to the data. The downside of such manual tuning is that it will usually require manual intervention again in the future as data volumes and distributions change.

Physical operator costing is very much the simplest of the three models, using formulas that have been shown to produce good physical plan selections for a wide range of queries on a wide range of hardware. The exact numbers used probably do not closely model your hardware or mine, but luckily that turns out not to be too important in most cases. No doubt the models will be updated over time as new hardware trends continue to emerge.

Assumptions

All models make simplifying assumptions, and the cardinality estimation and costing models are no different. Some things are just too hard to model, and other things just haven’t been incorporated into the model yet. Still other things could be modelled, but turn out not to add much value in practice and cost too much in terms of complexity or resource consumption. Some of the major simplifying assumptions that can affect real plan quality are:

-

All queries start executing with a cold cache

This isn’t as crazy as it sounds. Fetching data from disk tends to dominate the overall cost of a query, modelling the amount of data that can be expected to be in cache already is hard, and this assumption does at least affect everything equally. The optimizer does contain some logic to account for pages that might be in cache after the first access.

-

Statistical information is independent

Correlations do frequently exist between columns in real databases, so this assumption can be problematic. Multi-column statistics, filtered indexes, and indexed views can sometimes help with this. The later cardinality estimator (released with SQL Server 2014) has made some moves away from complete independence.

-

Distribution is uniform

This is assumed where the system has no information to the contrary. One example: costing assumes that seeks (lookups) into an index are randomly distributed throughout the full range of the index.

Helping the Optimizer

There are three approaches to working with the optimizer:

- Ignore it. It works well out of the box with default settings and automatic statistics.

- Override it using syntax tricks, hints, and plan guides.

- Provide it with the best information you can, and override it for just a small number of problematic queries.

All three are valid approaches in different circumstances, though the third option is probably the one to recommend as the best starting point. There is much more to helping the optimizer than just making sure statistics are up-to-date, however:

Use a relational design

This is probably the biggest single item. The various models all assume that your database has a reasonably relational design, not necessarily 3NF or higher, but the closer your structures are to relational principles, the better.

Cardinality estimation works best with simpler relational operations like joins, projects, selects, unions and group by. Avoid complex expressions and non-relational query features that force cardinality estimation to guess. Also remember that the cost-based optimizer’s exploration rules are based on relational equivalences, so having a relational design and writing relational queries gives you the best chance of leveraging the hard work that has gone into those rules.

Some features (e.g. ranking functions) operate on sequences rather than multi-sets (hence the Sequence Project physical operator); the original rules all work with multi-sets, and the seams between the two approaches often show through.

Use constraints

Use check constraints, foreign keys, unique constraints to provide the optimizer with information about your data. Simplification and exploration rules match patterns in the logical tree, and may also require certain properties to be set on the matched nodes.

Constraints and keys provide some of the most powerful and fundamental logical properties – omitting these can prevent many of the optimizer’s rules from successfully transforming to an efficient physical plan.

If an integer column should only contain certain values, add a check constraint for it. If a foreign key relationship exists, enforce it. If a key exists, declare it. It is not always possible to predict the benefits of providing this type of optimizer information, but my own experience is that it is much greater than most people would ever expect.

Statistics, indexes, and computed columns

Provide more than just default-sampled automatically-created statistics where appropriate (for example where distribution is unusual or correlations exist).

Create indexes that will provide potentially useful access paths for a wide range of queries. If you have expressions on columns in your WHERE clause, consider adding a computed column that matches the expression exactly.

A computed column does not have to be persisted or indexed to be useful; the system can auto-create statistics on the computed column, avoiding cardinality guessing.

Deep Trees

Large, complex queries produce large, complex logical trees. Any errors tend to multiply (perhaps exponentially) as the size of the tree increases, the search space of possible plans expands greatly, and things just become much more difficult in general.

Breaking a complex query into smaller, simpler, more relational, steps will generally get greater value out of the optimizer. A side benefit is that small intermediate results stored in a temporary table can have statistics created, which will also help in many cases.

There will always be cases where a large complex query is required for ultimate performance, but very often the performance difference is relatively small and may not be worth the future maintenance costs as hand-tuned monolithic queries tend to be fragile.

Opaque operators and new features

User-defined functions (other than the in-line variety) may seem convenient, but they are almost completely opaque to the optimizer—it has to guess at the cardinality and distribution of rows produced. This may improve in many cases with the release of SQL Server 2019, which is slated to support inlining of many scalar functions.

Newer features also tend to have much shallower support in the engine than longer-established features that have been around for multiple versions of the product. By all means use all the shiny new features, just be aware that combining them may produce plans of variable quality.

Trace Flags

To summarize the flags used in this series (all assume 3604 is also active):

| Flag | Description |

|---|---|

| 7352 | Final query tree |

| 8605 | Converted tree |

| 8606 | Input, simplified, join-collapsed, and normalized trees |

| 8607 | Output tree |

| 8608 | Initial memo |

| 8615 | Final memo |

| 8675 | Optimization stages and times |

The above were used in the presentation because they all work from 2005 to 2019. There are a large number of other optimizer-related flags (some of which only work on specific versions). A few are listed below for people that like this sort of thing:

| Flag | Description |

|---|---|

| 2373 | Memory use deriving properties and rules (verbose) |

| 8609 | Task and operation type counts |

| 8612 | Add cardinality and cost |

| 8619 | Apply rule with description |

| 8620 | Add memo arguments to 8619 |

| 8621 | Rule with resulting tree |

| 8670 | Skip search 2 |

| 8677 | Run search 2 |

| 8750 | Skip search 0 |

| 8757 | Skip trivial plan |

As usual, these are undocumented, and unsupported (including by me!) and purely for educational purposes. Use with care, and at your own risk.

Final Thoughts

I hope you have gained some insight and intuition for the workings of the query optimizer: logical trees, simplification, cardinality estimation, logical exploration and physical implementation. The geeky internals stuff is fun, of course, but I rather hope people came away from these sessions with a better understanding of how query text is transformed to an executable plan, and how relational designs and simpler queries can help the optimizer work well for you.

Taking advantage of the optimizer frees up time for more productive things – new projects, tuning the odd interesting query, whatever it is you would rather be doing than fighting the same query-tuning battles over and over again.

There are some great resources out there to learn more about SQL Server. Make full use of them to continue to improve your technical skills and just as importantly, experiment with things to build your experience level. Take a deeper look at query plans and the properties they contain; there is a wealth of information there that sometimes requires a bit of thinking and research to understand, but the insights can be invaluable.

Scripts used are available on GitHub.